Imagine a content marketing team at a mid-sized B2B software company. They use an AI writing assistant to draft campaign briefs, a generative AI tool to build persona profiles, and a large language model integrated directly into their CRM to summarize pipeline data. On any given day, they input customer segment details, pricing strategy notes, and competitive intelligence into these platforms without a second thought. Their output is fast, polished, and consistent.

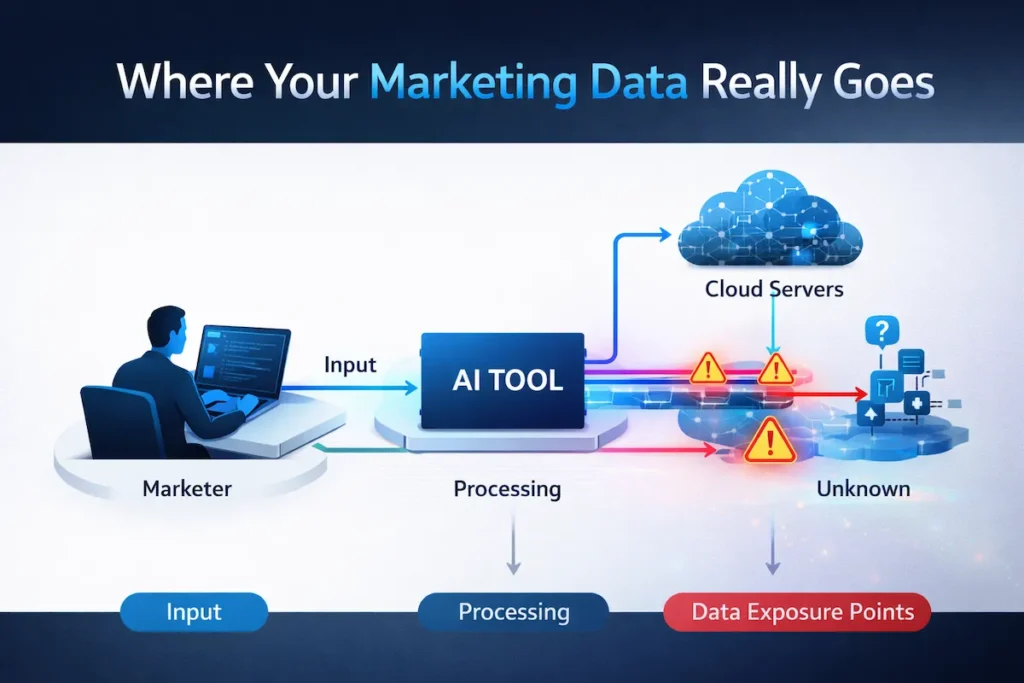

What most teams do not realize is that every AI prompt creates a new, often invisible, point of data exposure. They are optimizing for output quality while expanding their data exposure surface with every interaction. This growing reliance on AI tools is introducing serious AI data security risks in marketing that most teams do not yet fully understand.

The Invisible Layer Most Marketing Teams Miss

This is not a hypothetical edge case. It is the daily operational reality for tens of thousands of marketing teams in 2026. The acceleration of AI adoption across content, demand generation, and customer experience functions has created a significant and largely unaddressed gap between how marketing data is used internally and how it is handled once it enters third-party AI systems. This gap creates what can be described as the “prompt-level data exposure layer” — a new category of risk where sensitive marketing data is unintentionally transmitted outside the organization through everyday AI usage, with no logs, no alerts, and no visibility into what was shared or where it went.

The issue is not that AI tools are inherently unsafe. The issue is that marketers were never trained to think about data governance, and AI vendors have not made it easy for them to start. As AI becomes foundational to marketing operations, teams that ignore data hygiene are accumulating compounding risk that will eventually surface as a compliance failure, a brand incident, or worse.

Marketing leaders must begin treating AI adoption as a data governance decision, not just a productivity decision. This hidden layer is one of the most overlooked AI data security risks in marketing today.

Every AI prompt is not just a request — it is a data transfer event.

The Rise of AI in Marketing Workflows (And the Hidden Trade-Off)

AI has become deeply embedded in the marketing function. Teams use it to generate content at scale, personalize email sequences, analyze audience behavior, build competitive research summaries, and automate campaign reporting. According to research from Salesforce, the majority of marketers now use AI in some capacity, and adoption is accelerating with each product release cycle. This aligns with broader industry trends showing AI adoption in marketing increasing year over year across content, analytics, and customer experience functions, with governance practices lagging significantly behind.

As adoption grows, AI data security risks in marketing are increasing alongside the convenience these tools provide.

The value proposition is straightforward: AI reduces the time required for repetitive tasks, improves output consistency, and enables small teams to operate with the throughput of much larger ones. For marketing leaders facing pressure to produce more with flatter budgets, this is difficult to argue against.

But efficiency and control are not the same thing. When a marketer pastes a customer segment description into an AI prompt to generate a persona document, they are sending data outside the organization’s environment. When they use an AI summarization tool to process internal meeting transcripts, those transcripts leave the controlled perimeter. The value exchange is real, but so is the exposure.

There is also a growing “shadow AI” problem. Just as shadow IT emerged when employees began using unauthorized cloud applications, shadow AI is now appearing in marketing departments where individuals or small teams adopt AI tools independently, outside of IT or legal review. These tools may have no enterprise data agreements, no retention limits, and no audit trail. They are simply useful, so people use them.

Where Marketing Data Actually Goes (And AI Data Security Risks in Marketing)

Understanding the risk starts with understanding the basic flow of data through most AI tools.

When a marketer inputs a prompt, that text travels from the user’s device to the vendor’s servers for processing. The AI model interprets the input, generates a response, and returns it to the user. What happens between those steps varies significantly by vendor and by product tier.

Understanding this flow is critical because AI data security risks in marketing often begin at the point where data leaves internal systems.

Some vendors retain inputs and outputs for model training or quality review. Others offer enterprise agreements that explicitly exclude this, but only if the customer has procured the right tier and configured the settings correctly. Many free or freemium tools retain data by default with minimal controls. Default settings are rarely the most protective settings.

The specific marketing data categories that regularly appear in AI prompts include customer demographic and behavioral data, campaign performance metrics, pricing and competitive positioning, internal strategy documents, and personally identifiable information about prospects or customers. Each of these carries different levels of sensitivity, and most marketing teams have no formal classification for which categories should never enter an AI system.

Without visibility into this process, AI data security risks in marketing remain difficult to detect and control.

5 Real Data Exposure Risks Marketers Overlook

These scenarios highlight real-world AI data security risks in marketing that are often ignored in daily workflows.

1. Sensitive Campaign Data Entering AI Prompts

This is one of the most common examples of AI data security risks in marketing, where strategic information is shared unintentionally.

Marketing professionals routinely paste campaign briefs, budget figures, A/B test results, and go-to-market timelines into AI writing or analysis tools. This data often includes information that would be considered commercially sensitive: pricing thresholds, launch windows, competitive differentiation strategies.

A marketer drafting a product launch announcement might include internal positioning notes, a tentative pricing tier, and references to a feature not yet publicly disclosed. All of that can enter the vendor’s environment.

In one common scenario, a SaaS marketing team inputs a pre-launch pricing model into an AI tool to generate positioning copy. That pricing model — not yet announced publicly — becomes part of an external processing system with no formal tracking, no retention controls, and no record that it was ever shared.

Why it matters: If a vendor’s data handling policies allow for human review of inputs, or if that data is retained and later exposed through a security incident at the vendor’s end, commercially sensitive campaign strategy could become accessible outside the organization.

2. Customer Data Used Without Proper Controls

From a compliance perspective, this is among the most serious AI data security risks in marketing.

Sales enablement content, persona documents, and email personalization workflows often involve real customer data. A marketer might input a customer’s company name, industry, and pain points to generate a tailored outreach sequence. In some cases, email addresses or contact names are included.

Depending on where those customers are located, this practice may conflict with regional data privacy regulations. The GDPR, for example, restricts how personal data can be processed by third parties, and most standard AI tool subscriptions do not include the data processing agreements required for compliant use.

Why it matters: Regulatory penalties aside, customers who discover that their data was processed through an unvetted third-party AI system without their knowledge are unlikely to respond positively. The brand trust implications are significant.
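One modest safeguard is to strip obvious identifiers from a prompt before it leaves the organization. The Python sketch below is illustrative only: the function name `redact_emails`, the `[EMAIL]` placeholder, and the deliberately simple regular expression are assumptions made for this example, not part of any vendor's tooling. It catches common email formats but does not detect contact names or company names, so it complements a data processing agreement rather than replacing one.

```python
import re
from typing import Tuple

# Deliberately simple pattern (an assumption for this sketch): it matches
# common email formats but will miss unusual ones, and it cannot detect names.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(prompt: str, placeholder: str = "[EMAIL]") -> Tuple[str, int]:
    """Return the prompt with email addresses replaced, plus how many were replaced."""
    return EMAIL_PATTERN.subn(placeholder, prompt)


if __name__ == "__main__":
    draft = "Write a follow-up to jane.doe@example.com about the renewal."
    cleaned, count = redact_emails(draft)
    print(cleaned)
    print(f"addresses redacted: {count}")
```

Returning the count alongside the cleaned text gives the team a simple signal: a non-zero count means someone was about to send personal data to an external tool.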

3. Third-Party AI Tools Without Vendor Transparency

Without transparency, AI data security risks in marketing increase significantly due to unknown data handling practices.

The AI tooling market has expanded rapidly, and not all vendors are equally forthcoming about their data practices. Many tools used in marketing workflows offer minimal documentation about where data is stored, how long it is retained, whether it is used for training, and under what circumstances it may be accessed by vendor employees.

Procurement processes in marketing departments often prioritize features and pricing over privacy documentation. Terms of service for AI tools can run to thousands of words and are rarely reviewed by anyone with legal or compliance expertise.

Why it matters: IBM Security’s Cost of a Data Breach Report consistently finds that third-party involvement in a breach significantly increases the average cost and complexity of response. For marketing teams, the reputational exposure from a vendor-side incident involving customer data can extend well beyond the financial damage.

4. Lack of Internal AI Usage Policies

This lack of structure is a major driver of AI data security risks in marketing across growing teams.

Most marketing teams have no written policy governing how AI tools should be used. There is no approved tool list, no guidance on what data categories are permitted in AI prompts, and no process for evaluating new tools before they are adopted.

This is not a failure of intent. It reflects the speed at which AI tools have become available and the gap between marketing’s pace of adoption and legal, IT, and compliance teams’ capacity to keep up. The result is an environment where usage is driven entirely by individual judgment, which is inconsistent by definition.

Why it matters: Without policy, there is no accountability. When a data exposure incident occurs, organizations without documented policies face greater difficulty demonstrating due diligence to regulators, customers, or partners.

5. Over-Reliance on AI Without Human Review

Over-automation further amplifies AI data security risks in marketing when outputs are not properly reviewed.

AI tools occasionally produce outputs that contain information from their training data or that reflect a misinterpretation of the input. In some cases, AI-generated content includes details that appear plausible but are factually incorrect or, more dangerously, are drawn from other organizations’ proprietary data that may have been part of a training corpus.

Beyond accuracy, marketers who use AI to generate communications without thorough human review risk publishing content that inadvertently discloses internal information, misrepresents a partner or customer, or violates brand guidelines in ways that carry legal exposure.

Why it matters: AI output is a starting point, not a finished product. Teams that skip substantive review as a matter of workflow efficiency are creating a liability that compounds over time.

What This Means for Content Marketing Teams in 2026

The conversation among marketing leaders has shifted. Early AI adoption was primarily framed around what tools could do. The more mature conversation is now about how to adopt AI in ways that do not create downstream problems. AI-driven cybersecurity statistics can help put the scale of these risks in context and show how rapidly the threats are evolving.

For content teams, these AI data security risks in marketing are no longer theoretical — they are operational. This shift has practical implications for three areas most marketing leaders care about.

Brand trust is increasingly tied to data stewardship. Consumers and B2B buyers are more aware of data practices than they were even two years ago. A brand that is known to handle data carelessly, whether through a regulatory incident or a public disclosure, loses credibility that is difficult to recover.

Compliance exposure is growing as regulations expand and enforcement becomes more active. Marketing teams that assumed data governance was an IT problem are finding that they are in scope for regulatory obligations tied to how they use and process data in their workflows, including AI workflows.

Data ownership is emerging as a strategic concern. When proprietary research, customer insights, or competitive intelligence enters a third-party AI system without appropriate controls, the organization may lose some practical control over that intellectual property. Understanding vendor agreements and data rights is now a marketing operations issue, not just a legal one. Ignoring these AI data security risks in marketing can lead to long-term operational and reputational consequences.

A Practical Framework for Secure AI Adoption in Marketing

Organizations do not need a complex governance program to reduce the most significant risks. The essentials can be implemented as a Secure AI Marketing Framework built on three layers. Think of it as a simple stack: policy defines the boundaries, access control enforces them, and monitoring ensures continuous accountability, with each layer dependent on the one beneath it.

This framework directly addresses AI data security risks in marketing by helping teams at every level of the organization control how data is shared and processed.

1. Policy Layer (What Teams Are Allowed to Input Into AI)

Define, in writing, what categories of data may and may not be used as AI inputs. A simple classification — public, internal, confidential, restricted — provides the foundation. Any data in the confidential or restricted category should be explicitly excluded from AI prompts in non-enterprise tools.

Distribute this policy to the entire marketing team, not just managers. Include concrete examples of what is and is not acceptable, since abstract rules are rarely followed consistently.
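The four-tier classification can be captured in a small lookup that doubles as the "concrete examples" section of the policy. The Python sketch below is a minimal illustration: the category names and their assigned tiers are hypothetical, and each team would substitute its own. It encodes only the rule stated above for non-enterprise tools, and it treats any unlisted category as restricted, which is an assumption chosen to fail safe.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical examples; a real policy would list the team's own data categories.
DATA_CATEGORIES = {
    "published_blog_post": Sensitivity.PUBLIC,
    "campaign_performance_metrics": Sensitivity.INTERNAL,
    "unannounced_pricing": Sensitivity.CONFIDENTIAL,
    "customer_pii": Sensitivity.RESTRICTED,
}


def allowed_in_non_enterprise_tool(category: str) -> bool:
    """Apply the policy rule: confidential and restricted data stay out of prompts."""
    # Unlisted categories default to RESTRICTED so unknown data is blocked (assumption).
    level = DATA_CATEGORIES.get(category, Sensitivity.RESTRICTED)
    return level < Sensitivity.CONFIDENTIAL


if __name__ == "__main__":
    for name in DATA_CATEGORIES:
        verdict = "allowed" if allowed_in_non_enterprise_tool(name) else "excluded"
        print(f"{name}: {verdict}")
```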

2. Access Control Layer (Who Can Use Which Tools)

Establish an approved tool list that has been reviewed by IT, legal, and security teams for enterprise data agreements, retention policies, and compliance documentation. Any tool not on the list requires approval before use.

This does not mean limiting innovation. It means building a review process that is faster than ad hoc adoption, which is possible when the evaluation criteria are clearly defined in advance.
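Defining the evaluation criteria in advance can be as lightweight as a structured record per tool. The following Python sketch assumes three yes/no criteria taken from the review areas named above (enterprise data agreement, retention policy, compliance documentation). The tool names, the `ToolReview` class, and the status strings are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ToolReview:
    name: str
    has_enterprise_data_agreement: bool
    retention_policy_reviewed: bool
    compliance_docs_on_file: bool

    def approved(self) -> bool:
        # A tool is approved only when every criterion is satisfied.
        return (
            self.has_enterprise_data_agreement
            and self.retention_policy_reviewed
            and self.compliance_docs_on_file
        )


# Hypothetical review records, keyed by lowercase tool name.
REVIEWS: Dict[str, ToolReview] = {
    "examplewriter": ToolReview("ExampleWriter", True, True, True),
    "quickpersona": ToolReview("QuickPersona", False, True, False),
}


def check_tool(name: str) -> str:
    """Return the approval status for a tool, given the review records above."""
    review = REVIEWS.get(name.strip().lower())
    if review is None:
        return "not reviewed: request approval before use"
    return "approved" if review.approved() else "reviewed but not approved"


if __name__ == "__main__":
    for tool in ("ExampleWriter", "QuickPersona", "BrandNewTool"):
        print(f"{tool}: {check_tool(tool)}")
```

Keeping "not reviewed" distinct from "reviewed but not approved" matters in practice: the first is a request to start the review process, the second is a decision that has already been made.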

3. Monitoring Layer (Tracking Usage and Risks)

Implement basic visibility into what AI tools are being used across the marketing function. This can be as simple as periodic self-reporting or as sophisticated as network-level monitoring for unsanctioned tool access. The goal is to surface shadow AI usage before it creates exposure, not after.

Conduct quarterly reviews of the approved tool list as vendors update their terms and new tools enter the market.
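At the more sophisticated end, network-level monitoring can start as a script that compares outbound requests against the approved list. The Python sketch below assumes a hypothetical log format of one `user domain` pair per line and uses made-up `.example` domains. Real proxy or DNS logs will look different and need their own parsing.

```python
from collections import Counter
from typing import Iterable

# Hypothetical domains for illustration; a real list would come from IT or security.
KNOWN_AI_DOMAINS = {"ai-writer.example", "persona-gen.example", "summarize.example"}
APPROVED_DOMAINS = {"ai-writer.example"}


def unsanctioned_usage(log_lines: Iterable[str]) -> Counter:
    """Count requests per (user, domain) to known AI tools that are not approved."""
    counts: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip lines that do not match the assumed "user domain" format
        user, domain = parts[0], parts[1].lower()
        if domain in KNOWN_AI_DOMAINS and domain not in APPROVED_DOMAINS:
            counts[(user, domain)] += 1
    return counts


if __name__ == "__main__":
    sample = [
        "alice ai-writer.example",
        "bob persona-gen.example",
        "bob persona-gen.example",
        "carol intranet.example",
    ]
    for (user, domain), hits in unsanctioned_usage(sample).items():
        print(f"{user} -> {domain}: {hits} request(s)")
```

The output is best treated as a prompt for a conversation about why a tool is being used, which also feeds the quarterly review of the approved list.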

How Leading Marketing Teams Are Adjusting Their AI Strategy

The organizations managing this transition most effectively share a few common characteristics in how they approach AI marketing automation.

Leading organizations are adapting quickly as AI data security risks in marketing become a critical part of strategic decision-making.

They have developed internal AI guidelines that are specific to the marketing function, rather than relying on organization-wide policies that do not account for the particular data types marketing teams work with.

They maintain an approved tool list with clear rationale for inclusion, which also serves as a useful signal to vendors that enterprise-grade data protections are a procurement requirement, not a nice-to-have.

They have introduced data classification practices within their marketing operations workflow, so that the team understands which assets carry heightened sensitivity before those assets are used in AI-assisted processes.

These are not expensive or technically complex interventions. They are primarily organizational and cultural changes that require leadership prioritization more than infrastructure investment.

The biggest misconception in this space is that AI risk is a technical issue. In reality, it is a behavioral issue inside marketing teams — driven by convenience, speed, and a lack of visibility into what “sending a prompt” actually means from a data perspective. The technology is not the problem. The habits built around it are.

Key Takeaways for Marketing Leaders

To effectively manage AI data security risks in marketing, leaders must align technology usage with clear governance practices.

- AI tool adoption in marketing has outpaced data governance practices at most organizations, creating exposure that will become increasingly visible as regulatory scrutiny increases.

- The most common risks involve sensitive campaign data, customer information, and the use of unvetted third-party tools without enterprise data agreements.

- Default settings in AI tools are rarely the most protective settings; enterprise agreements and explicit configuration are required to reduce data retention risk.

- A three-layer governance framework — policy, access control, and monitoring — provides an actionable starting point that does not require significant technical investment.

- Marketing leaders need to own data governance in their function rather than waiting for IT or legal to address it on their behalf.

- Vendor transparency on data handling should be a standard procurement criterion, not an afterthought.

Frequently Asked Questions

What are AI data security risks in marketing?

AI data security risks in marketing refer to the potential exposure of sensitive business, campaign, or customer data when using AI tools that process inputs through external systems.

How do AI tools create data exposure risks for marketers?

AI tools can create data exposure when information entered into prompts is processed, stored, or transmitted through third-party systems, increasing AI data security risks in marketing workflows.

Why are AI data security risks in marketing increasing in 2026?

As more businesses adopt AI tools for content creation, analytics, and automation, AI data security risks in marketing are growing due to a lack of clear governance and data handling practices.

How can marketing teams reduce AI data security risks?

Marketing teams can reduce AI data security risks in marketing by implementing internal policies, using approved tools, and monitoring how data is shared across AI systems.

Are free AI tools riskier for marketing data security?

Yes, many free tools have fewer controls and may retain user data by default, which increases AI data security risks in marketing compared to enterprise-grade solutions.

What is the biggest mistake marketers make with AI tools?

One of the biggest mistakes is assuming AI tools are risk-free, while ignoring AI data security risks in marketing related to data sharing, compliance, and lack of visibility.

Conclusion

The productivity gains from AI in marketing are real. Dismissing them in the name of caution would be an overcorrection. But treating AI tools as consequence-free utilities — tools that you simply use without thinking about where your data goes — is a posture that carries growing risk.

The discipline most marketing teams need is not a deep technical understanding of AI infrastructure. It is a working knowledge of data responsibility: what data their teams handle, which data carries elevated sensitivity, and what protections need to be in place before that data enters any external system.

The organizations that build that discipline now are not just reducing risk. They are establishing a governance foundation that will make their AI adoption more sustainable, more defensible, and more trustworthy as the technology continues to evolve.

Understanding AI data security risks in marketing is no longer optional — it is becoming a core responsibility for modern marketing teams.

Organizations that proactively address AI data security risks in marketing will be better positioned to scale AI safely. The future of AI in marketing will not be defined by how fast teams adopt it — but by how responsibly they use it.
