When your factory floor sensor needs a decision in 10 milliseconds, waiting for a data center in Virginia simply isn’t an option. That’s the core tension driving the edge computing vs. cloud computing debate—and it’s one that U.S. businesses can no longer afford to ignore.

In 2026, the question isn’t which technology is better. It’s which one is right for your specific workload, budget, and growth strategy. This article breaks down the real differences in edge vs. cloud architecture, maps out use cases, and gives you a practical framework to make the right call.

Gartner has predicted that by 2025 roughly 75% of enterprise-generated data will be created and processed outside a traditional centralized data center or cloud, a clear signal that distributed computing at the edge is no longer optional for forward-thinking organizations.

Key Takeaways

- Edge computing processes data near the source to minimize latency, which is essential for IoT devices, real-time systems, and remote deployments.

- Cloud computing provides massive scalability, global infrastructure, and rich tooling at a low upfront cost.

- Hybrid architectures combining edge and cloud are fast becoming the standard enterprise cloud strategy in 2026.

- IoT and real-time applications benefit most from edge deployments, while SaaS, analytics, and AI training belong in the cloud.

- Security and compliance requirements should heavily influence your final architecture decision.

What is edge computing in edge computing vs. cloud computing?

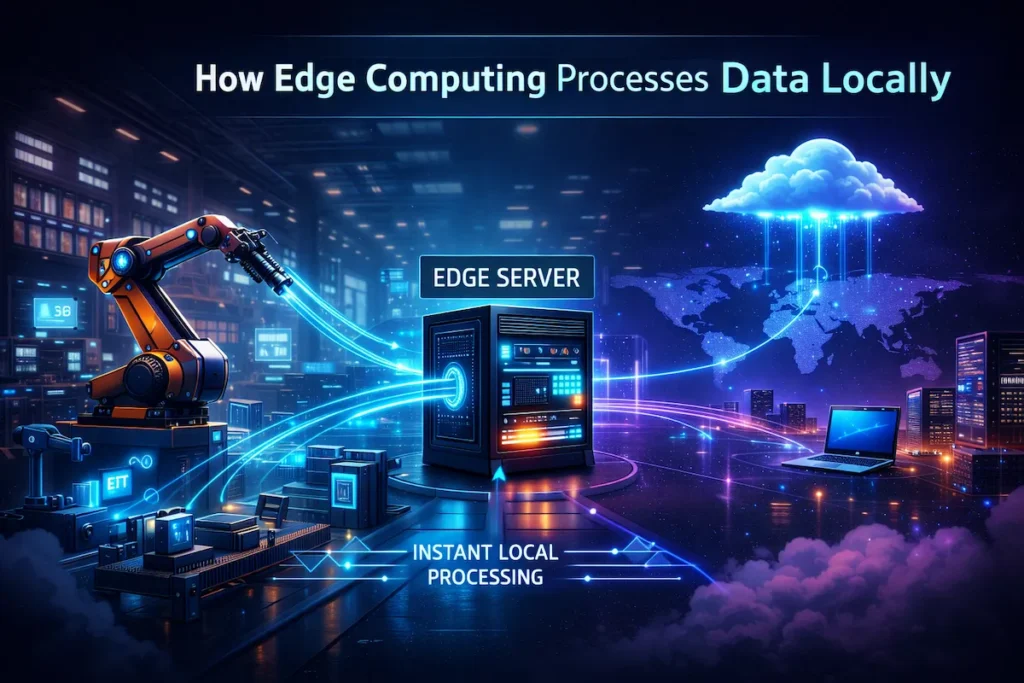

Edge computing is a distributed computing model that moves data processing closer to where data is actually created — on-site at a factory, inside a retail store, within a connected vehicle, or at the base of a 5G tower. For a deeper technical explanation, see edge computing explained by AWS.

Instead of sending raw data to a remote data center, edge devices handle analysis and decision-making locally. The term “edge” refers to the network’s edge—the point where devices connect to the broader internet—and it’s the architectural opposite of centralized cloud infrastructure.

How Edge Computing Works in Edge Computing vs Cloud Computing

Edge systems deploy small, ruggedized compute nodes — edge servers, gateways, or IoT devices — at or near the data source. These nodes run lightweight AI models, filter data, execute logic, and send only relevant results back to a central cloud or on-premises system.

A real-world example: A U.S. automobile manufacturer using smart manufacturing deploys edge nodes on the assembly line. Cameras capture images of parts every second. The edge node runs a defect-detection model locally, flags issues in real time, and sends only exception reports to the cloud. No bottleneck. No round-trip delay. No massive bandwidth bill.
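The filtering step in the assembly-line example can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the `Inspection` record, the `defect_score` field, and the `DEFECT_THRESHOLD` value are all hypothetical names chosen for the sketch, and the local model that would produce the scores is assumed rather than shown.

```python
from dataclasses import dataclass


@dataclass
class Inspection:
    part_id: str
    defect_score: float  # 0.0 (clean) to 1.0 (certain defect), from a local model


# Hypothetical threshold; a real line would tune this against labeled parts.
DEFECT_THRESHOLD = 0.8


def exception_reports(inspections, threshold=DEFECT_THRESHOLD):
    """Keep only the inspections the local model flags as likely defects."""
    return [i for i in inspections if i.defect_score >= threshold]


batch = [
    Inspection("A-001", 0.02),
    Inspection("A-002", 0.91),
    Inspection("A-003", 0.15),
]
to_cloud = exception_reports(batch)
print(f"{len(to_cloud)} of {len(batch)} inspections forwarded to the cloud")
```

Only the flagged part leaves the site, which is where the bandwidth saving comes from.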

Key Benefits of Edge Computing vs Cloud Computing

When speed and proximity matter, edge delivers clear advantages over centralized cloud infrastructure:

- Ultra-low latency: Processing happens within milliseconds—non-negotiable for autonomous vehicles, surgical robotics, or real-time fraud detection at point-of-sale.

- Reduced bandwidth costs: Only processed data travels the network, cutting data transfer costs significantly for large IoT deployments.

- Offline resilience: Edge nodes continue operating during internet outages — reliable for remote sites like oil rigs, mines, or rural logistics hubs.

- Data sovereignty: Sensitive data can be processed locally and never leave a facility, simplifying HIPAA and CCPA compliance for U.S. healthcare and financial firms. See our Cloud Security Tips: Protecting Your Data Online for practical guidance on keeping data secure at every layer.

- 5G synergy: The 5G rollout across the U.S. makes edge computing even more powerful, enabling near-real-time performance at mobile endpoints.
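The offline resilience point above usually comes down to a store-and-forward buffer: the node keeps working during an outage and drains its backlog once the link returns. The sketch below assumes a hypothetical `StoreAndForward` class and a caller-supplied `send` function; real edge runtimes typically add persistence to disk and retry policies that are omitted here.

```python
from collections import deque


class StoreAndForward:
    """Buffer readings locally and flush them when the uplink is available."""

    def __init__(self, max_items=1000):
        # Bounded queue: once full, the oldest readings are dropped first.
        self.buffer = deque(maxlen=max_items)

    def record(self, reading):
        self.buffer.append(reading)

    def flush(self, send, online):
        """Send buffered readings in order; return how many were sent."""
        if not online:
            return 0
        sent = 0
        while self.buffer:
            send(self.buffer[0])   # send first, so a failure keeps the reading
            self.buffer.popleft()
            sent += 1
        return sent


node = StoreAndForward(max_items=3)
for value in [10, 11, 12, 13]:
    node.record(value)  # 10 is evicted when 13 arrives

delivered = []
node.flush(delivered.append, online=False)  # outage: nothing is sent
node.flush(delivered.append, online=True)   # link restored: backlog drains
```

The bounded queue is a deliberate trade-off in this sketch: it protects the node's limited memory at the cost of losing the oldest readings during a long outage.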

Limitations of Edge Computing vs Cloud Computing

The trade-offs in edge vs. cloud architecture are real, and edge has clear constraints:

- Higher upfront hardware costs: Deploying edge nodes across multiple locations requires capital investment in physical infrastructure.

- Management complexity: Hundreds of distributed edge devices are harder to patch, monitor, and secure than a single cloud tenant.

- Limited scalability: Edge hardware has finite compute capacity—scaling means buying and deploying more physical units.

- Vendor fragmentation: The edge hardware market is less standardized than cloud platforms, creating integration headaches for IT teams.

What is cloud computing in edge computing vs. cloud computing?

Cloud computing is the delivery of on-demand computing resources—servers, storage, databases, networking, and software—over the internet from large-scale data centers run by providers like AWS, Microsoft Azure, or Google Cloud. It’s centralized computing on a massive scale. Microsoft provides a detailed overview of the official definition of cloud computing and how centralized infrastructure powers modern applications.

For a comprehensive introduction, read our Cloud Computing Business Guide 2026.

How Cloud Computing Works in Edge Computing vs Cloud Computing

Cloud infrastructure pools compute resources across thousands of servers in geographically distributed data centers. Users access these resources via APIs or web interfaces, paying only for what they consume. Data travels from devices or on-premises systems to cloud data centers for processing, and then results return via the internet.

Types of Cloud Models in Edge Computing vs Cloud Computing

Understanding cloud model types is essential in any cloud computing vs. edge computing comparison:

- Public Cloud: Shared infrastructure managed by providers (AWS, Azure, GCP). Most cost-effective for standard workloads.

- Private Cloud: Dedicated infrastructure, on-premises or hosted. More control — common in regulated industries.

- Hybrid Cloud: A mix of public and private cloud, often integrated with edge nodes. The dominant enterprise cloud strategy in 2026.

- Multi-Cloud: Using multiple public cloud providers to avoid vendor lock-in and optimize cost or performance.

Not sure which model fits your organization? Our Private vs. Public Cloud Computing Guide 2026 walks through the decision in detail.

Benefits of Cloud Computing in Edge Computing vs Cloud Computing

Cloud computing delivers advantages that distributed vs. centralized computing comparisons often undersell:

- Virtually unlimited scalability: Spin up hundreds of servers in minutes and scale globally without buying a single rack.

- Lower operational overhead: Cloud service providers handle hardware maintenance, security patching, and infrastructure upgrades.

- Rich AI and analytics tooling: Mature AI workloads, machine learning pipelines, and big data services that would cost millions to replicate on-premises.

- Global reach: Deploy applications across regions with low effort, reaching U.S. and international customers from a single platform.

- Pay-as-you-go economics: No upfront capital — ideal for startups and businesses with variable workloads.

Limitations of Cloud Computing in Edge Computing vs Cloud Computing

No honest analysis glosses over cloud’s weaknesses:

- Latency is a structural constraint: Even a well-optimized cloud call adds 20–150ms of round-trip latency, depending on distance to the data center. For hard real-time applications, this is unacceptable.

- Ongoing costs grow with scale: Large data transfers and high compute hours can make the cloud expensive at enterprise scale. Explore the Best Cloud Storage Alternatives of 2026 if cost is a concern.

- Internet dependency: Cloud-reliant systems fail when connectivity drops — a serious risk for industrial or remote deployments.

- Data sovereignty concerns: Sending sensitive data to third-party data centers creates compliance complexity. Learn what can go wrong in Top Cloud Security Mistakes Companies Still Make.

Core Differences in Edge Computing vs Cloud Computing

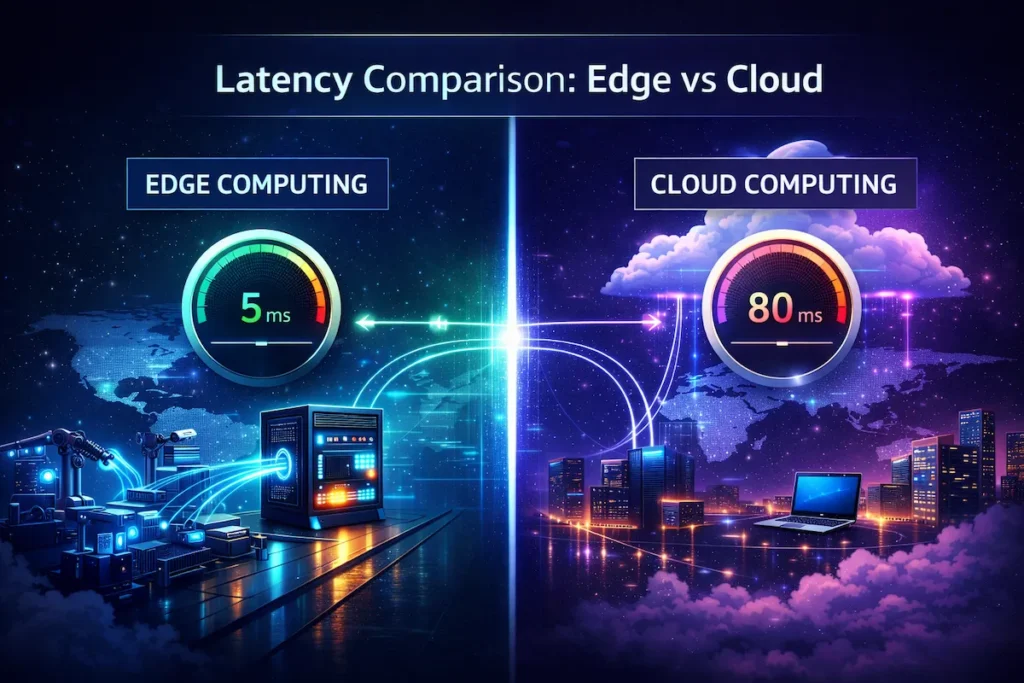

Latency Comparison in Edge Computing vs Cloud Computing

This is where the differences are most dramatic. Edge computing delivers sub-10ms latency for local processing. Cloud typically adds 20–150ms depending on geographic distance from the data center.

For applications like autonomous machinery, real-time bidding, or remote surgery, that gap is the difference between functional and dangerous.

Performance and Speed in Edge Computing vs Cloud Computing

In edge vs cloud performance, edge wins for time-sensitive, localized tasks. Cloud wins for complex, compute-intensive batch workloads — training large AI models, running analytics across petabytes of data, or rendering video at scale.

The best architectures in 2026 use both, routing workloads based on latency requirements.
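Routing by latency requirement can be as simple as comparing each workload's latency budget against what each tier can promise. The sketch below is illustrative only: the `route` function and the workload names are hypothetical, and the two constants are assumptions taken from the ranges quoted earlier in this section (about 10ms for edge, up to 150ms for cloud).

```python
# Assumed latency figures in milliseconds, based on the ranges quoted above.
EDGE_LATENCY_MS = 10
CLOUD_LATENCY_MS = 150  # worst case of the 20-150 ms range


def route(max_latency_ms):
    """Pick a tier for a workload given its latency budget in milliseconds."""
    if max_latency_ms >= CLOUD_LATENCY_MS:
        return "cloud"        # budget is loose enough for a cloud round trip
    if max_latency_ms >= EDGE_LATENCY_MS:
        return "edge"         # too tight for cloud, achievable locally
    return "unsupported"      # tighter than either tier can promise


# Hypothetical workloads and their latency budgets in milliseconds.
workloads = {
    "defect-detection": 15,
    "nightly-analytics": 60_000,
    "demand-forecast-training": 3_600_000,
}
placement = {name: route(budget) for name, budget in workloads.items()}
```

A real router would also weigh cost and data-residency rules; latency is used alone here to keep the idea visible.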

Security Differences in Edge Computing vs Cloud Computing

Edge vs cloud security is nuanced. Cloud platforms invest billions in perimeter security, compliance certifications, and threat intelligence. But centralizing data creates a single high-value target. Organizations often follow the NIST cloud computing security framework to ensure strong security controls in cloud environments.

Edge distributes data, reducing breach impact — but each edge node is an additional attack surface that must be hardened and patched. For regulated U.S. industries, edge can reduce compliance exposure by keeping data local. Before committing to either model, review the Top Cloud Security Mistakes Companies Still Make to avoid the most common pitfalls in cloud and hybrid deployments.

Cost Structure in Edge Computing vs Cloud Computing

Cloud follows an OpEx model — pay monthly, scale as needed, no capital outlay. Edge requires CapEx investment in hardware, plus ongoing maintenance costs.

For high-volume, steady-state IoT deployments, edge often becomes cheaper over a three-year horizon. For unpredictable or bursty workloads, cloud’s variable pricing wins.
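A rough way to test the three-year claim against your own numbers is a break-even calculation: edge pays a one-time hardware cost and then a lower monthly bill, so it catches up once the monthly savings cover the CapEx. The function name and all dollar figures below are illustrative assumptions, not data from any real deployment, and the model ignores hardware refresh cycles and financing.

```python
import math


def break_even_month(edge_capex, edge_monthly, cloud_monthly):
    """First month in which cumulative edge cost is at or below cumulative cloud cost.

    Returns None when edge never catches up (its monthly cost is not lower).
    """
    monthly_saving = cloud_monthly - edge_monthly
    if monthly_saving <= 0:
        return None
    return math.ceil(edge_capex / monthly_saving)


# Illustrative figures only.
month = break_even_month(edge_capex=120_000, edge_monthly=2_000, cloud_monthly=7_000)
print(f"Edge breaks even in month {month}")
```

With these assumed inputs the savings of 5,000 per month repay the 120,000 hardware outlay in month 24, inside a three-year horizon. A bursty workload with a low average cloud bill would push the break-even out or remove it entirely.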

Scalability in Edge Computing vs Cloud Computing

Cloud is the clear winner. Adding capacity is a configuration change. Edge scaling means procuring, shipping, and deploying physical hardware — a weeks-long process.

Enterprises planning rapid IoT expansion should factor this constraint into their edge vs cloud architecture decisions early.

Infrastructure Requirements in Edge Computing vs Cloud Computing

Cloud requires only an internet connection and a subscription. Edge requires on-site power, physical security, network connectivity, and IT staff — or a managed service provider — capable of maintaining distributed hardware.

This is often the deciding factor for small businesses evaluating which model to pursue.

Comparison Table for Edge Computing vs Cloud Computing

| Feature | Edge Computing | Cloud Computing |
|---|---|---|
| Latency | Sub-10ms (local processing) | 20–150ms depending on network distance |
| Scalability | Limited by physical hardware | Near-unlimited on-demand scaling |
| Upfront Cost | High (CapEx investment) | Low (pay-as-you-go OpEx) |
| Ongoing Cost | Lower at high volume | Grows with usage |
| Internet Dependency | Can operate fully offline | Requires internet connectivity |
| Data Privacy | High (local processing) | Varies by provider and configuration |
| Management Complexity | High (distributed nodes) | Lower (managed by provider) |
| Best For | IoT, real-time systems, remote sites | SaaS, analytics, AI training |
| 5G Integration | Excellent | Moderate |
| Compliance Control | High | Moderate–High |

Business Decision Matrix

Use this matrix to quickly map your business requirement to the right architecture in the cloud computing vs edge computing decision:

| Business Requirement | Best Option |
|---|---|
| Real-time processing under 20ms | Edge Computing |
| Global SaaS platform | Cloud Computing |
| Industrial IoT deployments | Edge + Cloud Hybrid |
| Large-scale AI model training | Cloud Computing |
| Remote locations with weak internet | Edge Computing |
| Variable or unpredictable workloads | Cloud Computing |
| On-site data privacy and compliance | Edge Computing |
| Rapid startup scaling | Cloud Computing |
| Smart manufacturing operations | Edge + Cloud Hybrid |
| Cost-sensitive storage at scale | Cloud (with alternatives) |

When Businesses Should Choose Edge Computing vs Cloud Computing

Small Businesses

For most small businesses, cloud wins. The low upfront cost, zero infrastructure management, and access to enterprise-grade tools via platforms like AWS or Azure make it the practical default.

Unless your small business runs physical sensors, point-of-sale hardware at scale, or operates in a low-connectivity environment, cloud is sufficient and far simpler to manage.

Enterprises

Large enterprises benefit most from a hybrid approach. Manufacturing, logistics, healthcare, and financial services firms increasingly deploy edge nodes for operational technology — assembly line monitoring, fleet telematics, patient monitoring — while relying on cloud for ERP, analytics, and customer-facing applications.

According to AWS Edge Computing documentation, enterprises running hybrid architectures report significant reductions in data transfer costs at scale.

Startups

Startups should default to cloud. Speed to market, cost flexibility, and access to managed AI and database services outweigh any latency benefits at an early stage.

Revisit edge infrastructure when workloads justify it — typically when IoT deployments exceed thousands of devices or real-time processing becomes a core product requirement.

IoT and Real-Time Applications

This is where edge computing wins outright. IoT devices generating continuous sensor data — smart agriculture, connected medical devices, industrial automation, autonomous vehicles — cannot tolerate cloud round-trip latency. Technologies like edge computing for IoT devices enable real-time data processing directly at the device level.

Fog computing architectures, where edge nodes serve as intermediaries between IoT devices and cloud backends, have become the standard pattern for these deployments, especially on 5G networks.

Hybrid Model: Combining Edge Computing and Cloud Computing

The most competitive businesses in 2026 don’t choose between edge and cloud — they architect both into a unified system. Many enterprises now adopt a hybrid cloud architecture that combines local edge processing with centralized cloud analytics.

The pattern is consistent: edge handles time-sensitive, local decisions; cloud handles storage, AI model training, business intelligence, and global coordination.

A U.S. retail chain might deploy edge nodes at each store for real-time inventory tracking and local payment processing, while the cloud aggregates sales data, trains demand forecasting models, and runs the customer loyalty platform. This hybrid cloud architecture reduces latency where it matters, cuts bandwidth usage, and preserves the operational flexibility cloud provides.

The key is designing clear data boundaries — what gets processed locally, what gets synchronized to the cloud, and how often. That architectural clarity is what separates clean hybrid deployments from unmanageable sprawl.
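One way to make those data boundaries explicit is to write them down as a small policy table that both edge and cloud teams can read. The sketch below is an assumption about how such a policy might look for the retail example: the `DataBoundary` type, the stream names, and the sync intervals are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Placement(Enum):
    LOCAL_ONLY = "local_only"   # processed at the edge, never uploaded
    SYNC_TO_CLOUD = "sync"      # processed locally, then synchronized


@dataclass(frozen=True)
class DataBoundary:
    stream: str
    placement: Placement
    sync_interval_s: Optional[int] = None  # None when nothing is uploaded


# Hypothetical policy for a single store.
BOUNDARIES = [
    DataBoundary("shelf_camera_frames", Placement.LOCAL_ONLY),
    DataBoundary("inventory_counts", Placement.SYNC_TO_CLOUD, sync_interval_s=300),
    DataBoundary("sales_totals", Placement.SYNC_TO_CLOUD, sync_interval_s=3600),
]


def streams_due(boundaries, seconds_since_last_sync):
    """Names of streams whose sync interval has elapsed."""
    return [
        b.stream
        for b in boundaries
        if b.placement is Placement.SYNC_TO_CLOUD
        and b.sync_interval_s is not None
        and seconds_since_last_sync >= b.sync_interval_s
    ]


due = streams_due(BOUNDARIES, 600)  # ten minutes since the last sync
```

Keeping the policy in one declarative list means a change to what leaves the store is a reviewed edit to a single file instead of a scattered code change.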

Pros and Cons of Edge Computing vs Cloud Computing

| Model | Pros | Cons |
|---|---|---|
| Edge Computing | Ultra-low latency, works offline, reduces bandwidth usage, strong data privacy, 5G and IoT integration | High upfront CapEx, complex to manage at scale, limited compute capacity, hardware fragmentation |
| Cloud Computing | Near-unlimited scalability, low upfront cost, global infrastructure, rich AI tooling, zero hardware management | Latency limitations, internet-dependent, costs rise at volume, data sovereignty risks |

Future Trends in Edge Computing vs Cloud Computing (2026–2030)

The edge computing vs cloud computing landscape is converging, not diverging. Five trends are shaping the next four years:

AI at the Edge: On-device AI inference is accelerating. Chips from NVIDIA, Qualcomm, and Intel now run sophisticated models locally, enabling edge nodes to make complex decisions without cloud round-trips. The emerging pattern is clear — train in the cloud, infer at the edge.

5G-Driven Edge Expansion: As 5G networks roll out across U.S. metro and rural areas, mobile edge computing (MEC) becomes viable at scale. Carriers are building edge compute directly into 5G infrastructure, making the network itself a distributed computing platform.

Standardization of Edge Platforms: Fragmentation is easing. Platforms like AWS Outposts, Azure Arc, and Google Distributed Cloud now extend cloud management tooling to edge environments, closing the operational complexity gap in edge vs cloud architecture.

Edge-Native Security: Zero-trust architectures are being adapted for distributed edge environments. Expect mature, automated security frameworks for edge deployments to become standard by 2028.

Fog Computing Maturity: Fog computing — a layered architecture bridging IoT endpoints and cloud backends — is maturing rapidly, becoming the default pattern for industrial digital transformation programs.

Frequently Asked Questions

Q1: Is edge computing replacing cloud computing?

No. Edge does not replace cloud; it complements it. Edge handles latency-sensitive, local tasks; cloud handles scale, analytics, and global coordination. Most enterprise deployments in 2026 use both in a hybrid model.

Q2: Which is more secure — edge or cloud?

Neither is inherently more secure. Cloud providers offer enterprise-grade perimeter security and compliance certifications, but centralizing data creates a high-value target. Edge reduces data exposure by keeping it local, but introduces more attack surfaces to manage. Your industry’s regulatory requirements should drive the decision.

Q3: How does 5G affect the edge vs cloud comparison?

5G dramatically enhances edge computing’s value proposition by providing the high-speed, low-latency connectivity needed to make distributed edge nodes viable at mobile endpoints — vehicles, wearables, and remote industrial sites — significantly expanding where edge can be deployed effectively.

Q4: What’s the cost difference between edge and cloud?

Cloud follows an OpEx model with low upfront cost but recurring fees that grow with usage. Edge requires higher initial CapEx for hardware but can be more economical over a 3–5 year horizon for high-volume, steady-state IoT workloads. The break-even point depends heavily on data volumes and device count.

Q5: Which industries benefit most from edge computing vs cloud computing?

Industries where milliseconds matter see the greatest returns from edge deployments. Manufacturing, healthcare, autonomous transportation, retail, and energy are leading adopters. A hospital running patient monitoring devices benefits from edge’s local real-time processing, while its billing, records management, and analytics workloads sit comfortably in the cloud. The industry itself doesn’t determine the choice; the specific workload within that industry does.

Q6: Can small businesses realistically implement edge computing and cloud computing together?

Yes, but only when the use case justifies it. In the edge computing vs cloud computing decision for small businesses, the hybrid model makes sense if you operate physical locations with IoT devices — think smart retail, connected clinics, or small-scale manufacturing. For most other small businesses, a cloud-first approach is the smarter starting point. Add edge only when a real latency or connectivity problem demands it, not as a default infrastructure choice.

Q7: How do edge computing and cloud computing handle data storage differently?

In a hybrid edge and cloud model, storage responsibilities are split by design. Edge nodes store data temporarily, just long enough to process it and act on it locally. Cloud handles long-term storage, backup, historical analysis, and large-scale data lakes. This division keeps edge nodes lean and fast while giving the cloud enough data to power reporting, AI training, and business intelligence. Getting this storage boundary right is one of the most critical decisions in any hybrid architecture.

Final Verdict on Edge Computing vs Cloud Computing

Here’s the practical decision framework:

Choose cloud if your workload is unpredictable or bursty, you need global reach, you’re a startup, or you lack on-site IT resources.

Choose edge if your application requires sub-20ms latency, you operate in low-connectivity environments, you’re deploying large-scale IoT infrastructure, or data sovereignty and compliance require local processing.

Choose hybrid if you’re an enterprise with mixed workloads, your IoT deployments are growing, or you need the flexibility to route workloads dynamically based on real-time latency and cost signals.
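The three rules above can be condensed into a small helper for a first-pass recommendation. This is a deliberate simplification: the `recommend` function and its flag names are hypothetical, it covers only some of the criteria listed, and it falls back to cloud when no edge signal is present, in line with the cloud-first advice for startups and small businesses.

```python
def recommend(*, needs_sub_20ms=False, low_connectivity=False,
              local_data_required=False, bursty_workload=False,
              global_reach=False):
    """Map a handful of checklist answers to 'edge', 'cloud', or 'hybrid'."""
    edge_signals = any([needs_sub_20ms, low_connectivity, local_data_required])
    cloud_signals = any([bursty_workload, global_reach])
    if edge_signals and cloud_signals:
        return "hybrid"   # mixed requirements: use both tiers
    if edge_signals:
        return "edge"
    return "cloud"        # default when nothing forces local processing


print(recommend(needs_sub_20ms=True))                       # edge
print(recommend(bursty_workload=True))                      # cloud
print(recommend(low_connectivity=True, global_reach=True))  # hybrid
```

Treat the result as a starting point for discussion; budget, team skills, and compliance details still need a human review.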

The edge computing vs cloud computing debate has no universal winner — only the right fit for the right workload. The businesses that thrive will be those that treat this as an architectural strategy, not a binary choice. Evaluate your latency requirements, data volumes, compliance obligations, and team capabilities — then build accordingly.